Can confirm!

Check out “Bastard Of The Apocalypse, The Earth Died Screaming” by Chuck Rogers! The second in the series, “Children Of The Sea,” just came out. It’s also available on GraphicAudio.net. I’ve made friends with the author, and he is going to write me into a future book in the series.

You’re already all over the place!

I already saw you in a band:

And even in a Dutch horror movie about Sinterklaas (the Dutch Santa Claus).

Hey, I’d push back a bit on the “AI is a black box” framing. A lot of experts like to present it that way, but it’s really not as much of a black box as it seems. With transformers, we actually know quite a lot about what happens under the hood mathematically: the architecture, the matrix multiplications, the attention operations, and the training objective are all completely transparent. What we don’t fully understand is how the internal representations end up encoding things that are not explicitly linguistic. LLMs aren’t really trained on “language” in the strict sense (syntax, semantics, grammar). They’re trained on human output, which carries a lot of statistical structure produced by embodied agents interacting with the world. In other words, text contains traces of perception, action, social behavior, and reasoning patterns.
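To make the “transparent” part concrete, here is a minimal pure-Python sketch of single-head scaled dot-product attention, softmax(QKᵀ/√d)·V. It assumes toy query, key, and value vectors and leaves out the learned projection matrices, masking, and multiple heads of a real transformer; the function names and numbers are illustrative only.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d)) V.

    queries, keys, values are lists of equal-length float vectors (one per token).
    Returns one output vector per query, plus the attention weights per query.
    """
    d = len(keys[0])
    outputs, weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        w = softmax(scores)
        # Output is the attention-weighted average of the value vectors.
        out = [sum(wj * v[i] for wj, v in zip(w, values)) for i in range(len(values[0]))]
        outputs.append(out)
        weights.append(w)
    return outputs, weights

# Toy example: two queries attending over three tokens.
Q = [[1.0, 0.0], [0.0, 1.0]]
K = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
V = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
out, w = attention(Q, K, V)
```

Every step is ordinary arithmetic that can be printed and inspected; the interpretive difficulty described above lies in what the learned vectors encode, not in how they are combined.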

Transformers end up learning the statistical regularities of that entire mixture. Because the model itself has no grounding in perception or embodiment, those patterns get encoded purely as geometry in a high-dimensional vector space.

So when people inspect activations across layers and see strange transformations, the opacity isn’t because the math is mysterious. It’s because those internal directions correspond to regularities that don’t map cleanly to human-interpretable concepts.
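Mechanically, “reading” an internal direction is a single dot product; the hard part described above is knowing what a given direction corresponds to. A minimal sketch with made-up 3-dimensional vectors (real activations have thousands of dimensions, and meaningful directions would have to be found by some separate method, such as a trained linear probe, which is not shown here):

```python
import math

def norm(v):
    """Euclidean length of a vector."""
    return math.sqrt(sum(x * x for x in v))

def read_along(activation, direction):
    """Scalar projection of an activation onto a direction (normalized to unit length)."""
    return sum(a * d for a, d in zip(activation, direction)) / norm(direction)

def cosine(a, b):
    """Cosine similarity: the projection divided by the activation's own length."""
    return read_along(a, b) / norm(a)

# Hypothetical 3-dimensional "activation" and two hypothetical directions.
activation = [3.0, 4.0, 0.0]
direction_1 = [1.0, 0.0, 0.0]
direction_2 = [0.0, 0.0, 2.0]
print(read_along(activation, direction_1))  # 3.0
print(read_along(activation, direction_2))  # 0.0
print(cosine(activation, direction_1))      # 0.6
```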

The “black box” is less about unknown mechanisms and more about interpreting what structures the model discovered in that space.

That’s all true, but the end result is the same: it is difficult to impossible to work backwards from the model state to what in the tuple of training data, initial conditions, and prompt led to a particular reasoning output.

That sounds a lot like chaos theory. I know next to nothing about AI, so this might be a super dumb question, but do you see representations of phase space, as it relates to attractors, in AI? Similar to the way they show up in chaos theory?

This I don’t know (other than that all systems should have a phase space representation; I have no idea whether getting to that representation is even tractable for an AI model).

There’s a bit of chaos during the training phase, but not during the inference stage, in the operational model’s forward passes. They are complex, but each one is still a static map: they lack the nonlinear, recurrent evolution of weather systems or fluid dynamics.
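The distinction in this reply (iterated dynamics versus a fixed map) can be illustrated with the logistic map, a textbook chaotic system: iterating it amplifies a difference of 1e-10 in the starting point until the two trajectories are unrelated, while applying a fixed function once keeps the difference tiny. `static_map` is a deliberately trivial, hypothetical stand-in; a real forward pass is nonlinear and vastly larger, and this sketch only mirrors the "static map" framing above.

```python
def logistic(x, r=4.0):
    """One step of the logistic map; r = 4.0 is a standard chaotic setting."""
    return r * x * (1.0 - x)

def trajectory(f, x0, steps):
    """Iterate f from x0, returning every visited state (a recurrent evolution)."""
    xs = [x0]
    for _ in range(steps):
        xs.append(f(xs[-1]))
    return xs

def static_map(x):
    """Hypothetical stand-in for a single feedforward pass: applied once, no state carried."""
    return 0.5 * x + 0.1

x0, eps = 0.2, 1e-10

# Recurrent: feed the output back in 60 times; the tiny initial gap gets amplified.
a = trajectory(logistic, x0, 60)
b = trajectory(logistic, x0 + eps, 60)
max_gap = max(abs(p - q) for p, q in zip(a, b))

# Static: apply the map once; the gap stays on the order of eps.
static_gap = abs(static_map(x0) - static_map(x0 + eps))
```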

If that were the case, the whole line of research that tries to address the black-box nature of transformer output would be pointless.

Transformer tokens are embedded in a high-dimensional, non-orthogonal vector space. Every position in the matrix is computed against thousands of other tokens, every time. Because of that, we are not quite sure how the non-linguistic part of human output is distributed across the whole matrix. What’s even worse, the statistical encoding is also distributed across different layers. But at the moment we’re trying to partially decode this by stacking transformers with highly orthogonal VSA (vector symbolic architecture) vector spaces derived from a combination of language compositional rules and PFC (prefrontal cortex) sequencing derivations. When you then process a text through this stack, you get a translation map of the drift between these two vector spaces, which represents the “magical” drift we see in transformers. It’s still not pure semantics, but it’s interpretable in the sense that it shows the statistical trace of the non-linguistic embeddings we’re so mystified by in transformers.
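For readers new to vector symbolic architectures: the “highly orthogonal” property comes from using very high-dimensional random vectors, which are almost orthogonal to one another by chance. The sketch below shows generic Kanerva-style hyperdimensional operations on bipolar vectors (binding by elementwise multiplication, bundling by majority vote). It is not the stacked transformer/VSA system described above, whose construction and drift measurement aren't given in the post; the role and filler names are hypothetical.

```python
import random

def random_hv(dim, rng):
    """Random bipolar hypervector with entries in {-1, +1}."""
    return [rng.choice((-1, 1)) for _ in range(dim)]

def bind(a, b):
    """Binding by elementwise multiplication (self-inverse for bipolar vectors)."""
    return [x * y for x, y in zip(a, b)]

def bundle(*vs):
    """Bundling by elementwise majority vote (ties broken toward +1)."""
    return [1 if sum(col) >= 0 else -1 for col in zip(*vs)]

def similarity(a, b):
    """Normalized dot product in [-1, 1]; near 0 means near-orthogonal."""
    return sum(x * y for x, y in zip(a, b)) / len(a)

rng = random.Random(0)
D = 10_000
# Hypothetical roles and fillers, each an independent random hypervector.
role_subj, role_verb, filler_cat, filler_sits = (random_hv(D, rng) for _ in range(4))

# Encode a toy "sentence" as a bundle of role-filler bindings.
sentence = bundle(bind(role_subj, filler_cat), bind(role_verb, filler_sits))

# Unbind the subject role to recover a noisy copy of its filler.
recovered = bind(sentence, role_subj)
print(similarity(role_subj, role_verb))     # close to 0: near-orthogonal
print(similarity(recovered, filler_cat))    # clearly positive
print(similarity(recovered, filler_sits))   # close to 0
```

The recovered vector is noisy, so in practice it is identified by comparing it against the known vectors (a clean-up memory) and picking the most similar one.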

@wellbi - are YOU an AI?

Your whole explanation sounds so fascinating that I’m starting to get interested in neural networks (again).

Can you suggest some good introductory literature? I stopped reading about it at the beginning of the 90s, when we still worked with Hopfield networks and sloooowlyyy transitioned to thermodynamic concepts (which I hated!).

Reading your stuff makes me realize that a) either you made all this up or b) a lot of stuff has happened since I stopped being interested in neural networks.

If it’s the latter: more input! If it’s the former: great joke

Well, the issue is that even today, if your field of study is pure neural networks, you will end up doing scaling, bolting on modules, and tuning math. I work on neuromorphic and neuro-symbolic computing systems.

ISTF (Input-to-State Transfer via Forcing, a class of functions): a transient forcing on ongoing dynamics. Nothing is stored or encoded.

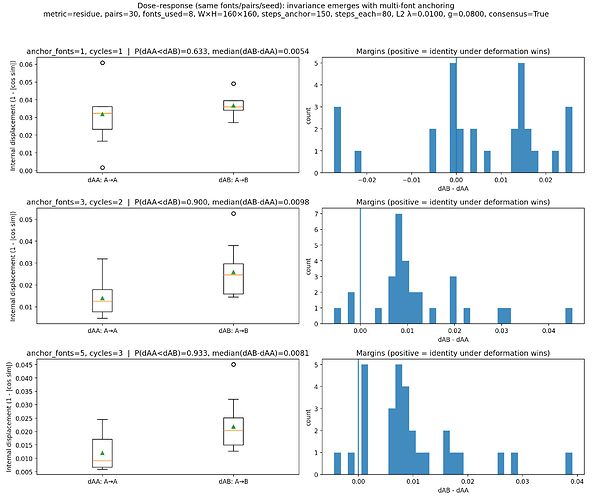

The system was exposed to the letter A rendered in multiple fonts. These images were generated externally and acted only as forcings. There were no labels, no averaging, and no stored exemplars.

After this anchoring phase, two transitions were compared:

A → A (new font)

A → B (same font)

Comparing A→A across fonts with A→B within the same font isolates identity from appearance: the former preserves identity while maximizing visual change, and the latter preserves appearance while breaking identity, which makes a representational explanation maximally implausible.

The metric was purely geometric: internal state displacement.
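The post doesn't describe the ISTF system itself, so the following is only a toy illustration of what a purely geometric “internal state displacement” metric can look like: a hypothetical leaky tanh network, integrated with Euler steps, is driven by one forcing vector and then by a second, and the metric is the Euclidean distance between the two resulting states. The forcing vectors are random stand-ins, not rendered letters, and the sketch makes no attempt to reproduce the A→A versus A→B result.

```python
import math
import random

def step(x, W, u, dt=0.1):
    """One Euler step of the toy dynamics dx/dt = -x + tanh(W x + u)."""
    n = len(x)
    drive = [sum(W[i][j] * x[j] for j in range(n)) + u[i] for i in range(n)]
    return [x[i] + dt * (-x[i] + math.tanh(drive[i])) for i in range(n)]

def force(x, W, u, steps=50):
    """Apply the forcing u to the ongoing dynamics for a fixed number of steps."""
    for _ in range(steps):
        x = step(x, W, u)
    return x

def displacement(a, b):
    """Purely geometric metric: Euclidean distance between two internal states."""
    return math.sqrt(sum((p - q) ** 2 for p, q in zip(a, b)))

def transition_displacement(x, W, u_first, u_second):
    """Drive with u_first, then u_second; report how far the second forcing moves the state."""
    s1 = force(x, W, u_first)
    s2 = force(s1, W, u_second)
    return displacement(s1, s2)

# Hypothetical setup: weak random coupling and random forcing vectors.
rng = random.Random(1)
n = 8
W = [[rng.gauss(0.0, 0.3 / math.sqrt(n)) for _ in range(n)] for _ in range(n)]
u1 = [rng.uniform(-1.0, 1.0) for _ in range(n)]
u2 = [rng.uniform(-1.0, 1.0) for _ in range(n)]
x0 = [0.0] * n
d = transition_displacement(x0, W, u1, u2)
```

In an experiment like the one described, the comparison would be between the displacements produced by two different second forcings after the same anchoring history.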

After multi-font anchoring, A → A reliably induced smaller displacement than A → B. This effect did not appear after a single exposure and strengthened with structured repetition.

No representation of “A” was formed. Instead, repeated related forcings constrained the system’s dynamics, producing a basin of low displacement for A-like perturbations.

Generalization emerged as stability under deformation, not as abstraction over instances.

Because the letters acted only as forcings and not as inputs, representational explanations are excluded. The result reflects a change in internal geometry, not stored content.

In this system, generalization is not applied after learning. It is the form that learning takes.

When a new input arrives, the system does not begin by encoding its details. The initial response is dominated by the existing geometry of state space: the system is first driven into the nearest compatible basin shaped by prior forcing. Only if the forcing persists do finer, instance-specific constraints accumulate and further deform the dynamics.

In this sense, the system first stabilizes on generalized identity and only later resolves particular structure. This ordering is not a design choice but a consequence of continuous dynamics under forcing.
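The basin picture in these two paragraphs can be illustrated with a textbook one-dimensional gradient system: a double-well potential with basins at -1 and +1. This is a generic toy, not the ISTF system; the potential, forcing strengths, and durations are arbitrary assumptions, and Euler steps stand in for continuous time. A weak, brief forcing leaves the state in its original basin, while a strong enough forcing held long enough carries it over the barrier, after which it settles into the other basin once the forcing stops.

```python
def grad_V(x):
    """Gradient of the double-well potential V(x) = x**4 / 4 - x**2 / 2 (minima at -1 and +1)."""
    return x ** 3 - x

def simulate(x0, forcing, t_on, t_total, dt=0.01):
    """Euler-integrate dx/dt = -V'(x) + f(t), where f = forcing while t < t_on, then 0."""
    x, t = x0, 0.0
    while t < t_total:
        f = forcing if t < t_on else 0.0
        x += dt * (-grad_V(x) + f)
        t += dt
    return x

# Start at rest in the left basin (x = -1) and apply two different transient forcings.
weak_brief = simulate(-1.0, forcing=0.2, t_on=1.0, t_total=20.0)     # ends near -1
strong_longer = simulate(-1.0, forcing=2.0, t_on=3.0, t_total=20.0)  # ends near +1
```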

Here’s, for example, a little note on the current engineering work I am doing with my team: how generalization of visual inputs, similar to that in biological systems, can be embedded in artificial systems.

Neural networks are great, but they are architecturally limited in what they can do. They work in a static, discrete-timestep fashion, so a lot of “biology-like” processing is simply out of reach for them. So there’s a major push, outside the commercial sphere, for hybrid architectures that combine the static embeddings of transformers with underlying dynamical systems.

@wellbi - the funny thing is: I understand (almost) nothing you’re saying

It’s very frustrating, as I was at the forefront of NN research in Holland at the end of the 80s / beginning of the 90s.

It’s the first time in about 30 years that I’ve gotten interested in reading about it. I’m still very critical of AI (in the sense of: we will all die!!!), but I do like the theory behind it…

Hence my request for introductory literature… the books I have at home are very outdated compared with what you write here…

I think this stack should give you a good starting point:

Steven Strogatz - Nonlinear Dynamics and Chaos; Melanie Mitchell - Artificial Intelligence: A Guide for Thinking Humans; Chris Eliasmith - How to Build a Brain

Michael Nielsen’s Neural Networks and Deep Learning, and anything related to transformers/LLMs by Jay Alammar

And then I would read anything based on Kanerva’s HDC (hyperdimensional computing) paradigm. I think the books above are a good set, BUT! I am not that much into reading books on this topic; I am much more into papers.

Cool - I am a thinking human. This book is for me.

Thanks!

Hey @wellbi, thanks for the food for thought which, if I’m honest, went completely over my head lol. I’m not an expert in AI research, it’s not my field (and frankly even in my field I wouldn’t claim to be an expert). But I tried to present a short-ish summary like @Whying_Dutchman asked for and that’s the best I could come up with. The book goes into a lot more detail about a lot of things and I’m sure you’d get even more out of it than I did.

Having said all that, I’m just going to echo @howard: I personally worry that it’s nigh impossible to figure out EXACTLY what prompted a particular output, and of course the result of that is that you can’t engineer particular behaviours out of (or into, for that matter) a super AI. Thanks, though, for all the book recommendations as well!

Ah, welcome!

Somehow I had to think about this video, don’t know why:

You and me on either side, @wellbi in the middle

Hahaha, that’s pretty apt

Anyway, so as not to hijack the topic too much and make it completely about AI, I’d like to mention I’m currently reading this one: The Practice: Shipping Creative Work by Seth Godin | Goodreads

Many people (Josh included) have talked on the forum about Dr Molly Gebrian’s book on practice. This one is also concerned with “practice”, but in a less scientific, more abstract way. It argues that practice is really all you can do if you care about your craft, and it challenges the outcome-focused approach to doing anything. It’s an interesting read and is broken up into tiny chapters, so it’s very easy to pick up.

@wellbi this was not the response I expected, and whilst I understood all of the words you used, it was the order that you used them in that left me somewhat confused. A lot like when I read A Brief History of Time; it was the imaginary numbers bit that freaked me out, just so you know.

When you mention ‘biology-like processing’, is that in line with the way our brains use pattern recognition to identify things visually, rather than processing all of the data individually? A gross simplification, but I’m sure you understand the mechanism I’m referring to.

Also for anyone wanting a masterclass in how to expose your own ignorance, just read my posts and follow my example!

I just read The Beach by Alex Garland… a fun, trippy read. I really dig Garland’s movies (Ex Machina, Annihilation, 28 Days Later) and his show (Devs), so I decided to check out his novel. I would describe it as a Lord of the Flies-style descent of an “idyllic” beach community on an uncharted island in Thailand. It was turned into a movie, but I haven’t seen that. I really enjoyed this novel.

Devs was soooooo good. Need to watch that again.

Hey,

The output transformers are trained on isn’t language as we understand it when we say “language” (grammar, semantics, etc.). Transformers learn to produce the final output. LLMs have “statistical mastery of a medium saturated with semantic residue.” The medium is the linguistic output of a dynamical, complex, continuously running, temporally thick system. When you speak, think, etc., what comes out isn’t a sequence of discrete tokens one after another. Anything you produce is entangled not only with the rules of language, but also with the state of your system as a whole (your mood, the hormonal response to past utterances, the situation you are in, etc.). A lot of these realities are derivable from the series of tokens, but a lot of meaning is encoded in this interplay between biology and language.

One of the core aspects of that output is the fact that we’re temporally thick agents. We don’t have a point-like now. (I go into more detail here: The Specious Present as a Cost Surface - by Tomáš Nousek.) When confronted with training data, transformers go over it and, through sheer brute force and computation, find the regularities and correlates that, in biological systems for example, are anchored purely in the dynamical interplay of activation, integration, and dwelling in the specious present, and they fold them into the geometry of their vector space. So we can see weird geometric transformations and drifts that seem completely non-linguistic, but they are probably just the transformer using its tools to encode something that it doesn’t have.